Understanding quantum computing

What is quantum computing?

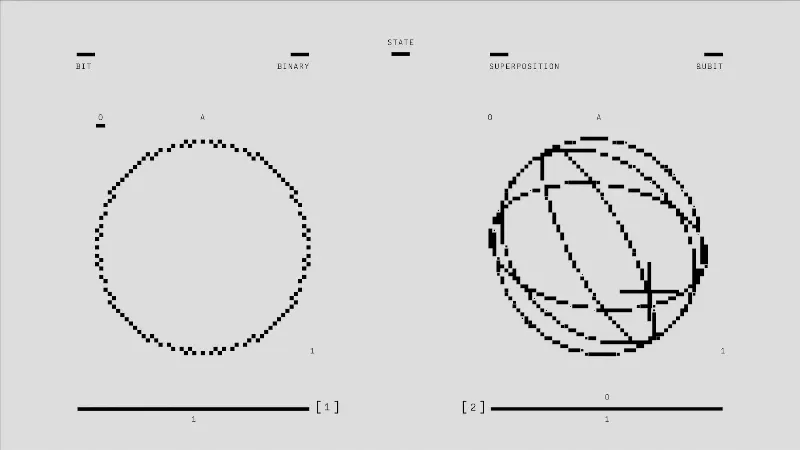

Quantum computing harnesses the principles of quantum mechanics to process information in fundamentally different ways than classical computing. While classical computers use bits as the smallest unit of data, represented as either a 0 or a 1, quantum computers use qubits. Thanks to a property known as superposition, a qubit can exist in a weighted combination of 0 and 1 at the same time. Measuring a qubit still yields a single 0 or 1, but an algorithm can manipulate the superposition before measurement, which lets quantum computers approach certain calculations in ways classical bits cannot.
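To make superposition concrete, here is a minimal sketch in plain Python that simulates a single qubit on a classical machine. It assumes the standard textbook picture: a qubit is a pair of amplitudes, the Hadamard gate creates an equal superposition, and the probability of each measurement outcome is the squared magnitude of its amplitude. The function names `hadamard` and `probabilities` are illustrative and do not come from any particular library.

```python
import math

def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (a, b),
    where a and b are the amplitudes of |0> and |1>."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))

def probabilities(state):
    """Born rule: each outcome's probability is |amplitude|^2."""
    return tuple(abs(amp) ** 2 for amp in state)

zero = (1.0, 0.0)             # the state |0>, which behaves like a classical 0
plus = hadamard(zero)         # equal superposition of |0> and |1>
probs = probabilities(plus)   # roughly 50% chance of 0, 50% chance of 1
back = hadamard(plus)         # a second Hadamard returns the qubit to |0>

print(probs)
print(back)
```

Applying the gate twice brings the qubit back to 0 with certainty, which a fair coin flip could never do. This is a small hint that a superposition is more than classical randomness.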

How does quantum computing work?

Quantum computers rely on several key principles of quantum mechanics. In addition to superposition, entanglement is a crucial feature. When qubits become entangled, they can no longer be described independently: the measurement outcome of one is correlated with the outcome of another, no matter the distance separating them. Quantum algorithms combine superposition and entanglement with interference, which strengthens the amplitudes of correct answers and cancels those of wrong ones. This can let quantum computers solve certain problems more efficiently than classical systems, particularly in simulation and some optimization tasks.
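Entanglement can also be sketched with a small classical simulation. The example below assumes the usual textbook construction of a Bell state: start two qubits in 00, apply a Hadamard gate to the first, then a CNOT gate with the first qubit as control. The helper names `apply_h_first` and `apply_cnot` are hypothetical, chosen only for this illustration.

```python
import math

LABELS = ["00", "01", "10", "11"]  # first qubit is the left digit

def apply_h_first(state):
    """Hadamard on the first qubit of a 2-qubit state [a00, a01, a10, a11]."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11),
            s * (a00 - a10), s * (a01 - a11)]

def apply_cnot(state):
    """CNOT with the first qubit as control: flips the second qubit
    when the first is 1, i.e. swaps the amplitudes of |10> and |11>."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

start = [1.0, 0.0, 0.0, 0.0]              # both qubits in 0
bell = apply_cnot(apply_h_first(start))   # entangled Bell state

# Probability of each joint measurement outcome (Born rule).
bell_probs = {label: abs(amp) ** 2 for label, amp in zip(LABELS, bell)}
print(bell_probs)
```

Only the outcomes 00 and 11 have nonzero probability, so measuring one qubit tells you what the other will show. Neither qubit has a definite value on its own before measurement.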

Quantum computers are still in the early stages of development, but they hold promise for various applications. For instance, a sufficiently large quantum computer could upend cryptography by breaking widely used public-key schemes such as RSA, which are currently considered secure. Quantum computers could also simulate molecular interactions for drug discovery, a process that is extremely resource-intensive on classical computers.

This technology matters not only for its immediate applications but also for what it reveals about the limits of current computational paradigms. As researchers continue to explore the capabilities of quantum systems, discussions surrounding quantum computing often reflect broader themes in technology, such as the quest for more efficient problem-solving methods and the ethical implications of powerful new tools.

As we stand on the brink of a new computational era, the unfolding story of quantum computing invites curiosity about how it will reshape our understanding of information and its processing.
